The wall

Most things I build these days have some kind of AI integration, and the same question keeps coming up: which model should I actually use? There are comparison tools baked into various platforms, but nothing standalone, nothing free, and nothing that lets you test with your own files and prompts without jumping through hoops.

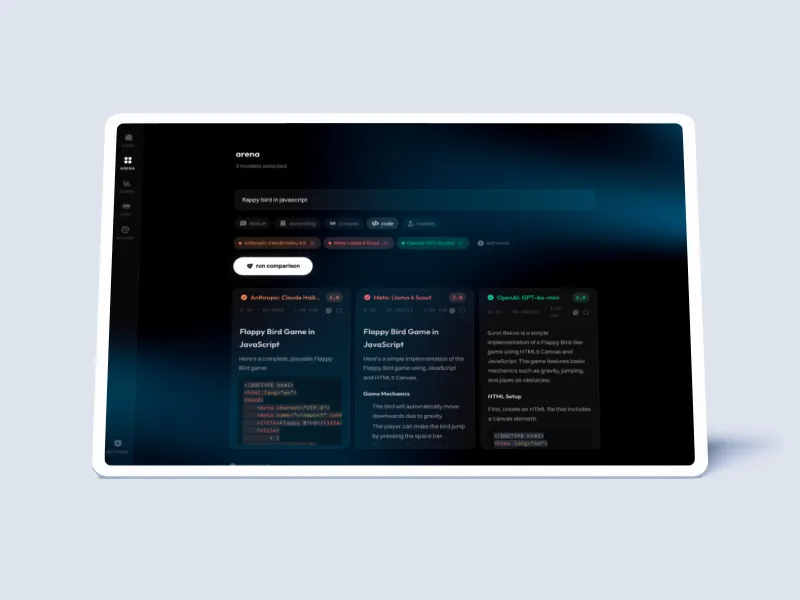

I just wanted a simple way to throw a real task at a bunch of models and see who does it best.

How it works

Pick your models, write a prompt, optionally upload files and schemas, hit run. Every model streams its response side by side in real time, colour-coded so you can tell them apart. When they finish, an AI judge reads all the responses blind and scores them on accuracy, clarity, and completeness. Scores accumulate on a leaderboard across runs.
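To make the leaderboard idea concrete, here is a minimal sketch of accumulating judge scores across runs. It is not the tool's actual code: the `RunScore` and `LeaderboardEntry` shapes, the function names, and the choice to rank by mean combined score per run are all assumptions for illustration.

```typescript
// Hypothetical shapes: one judge score per model per run.
interface RunScore {
  modelId: string;
  accuracy: number;
  clarity: number;
  completeness: number;
}

interface LeaderboardEntry {
  modelId: string;
  runs: number;  // how many runs this model has been scored in
  total: number; // sum of all three criteria across those runs
}

// Fold one run's scores into the running totals.
function recordRun(board: Map<string, LeaderboardEntry>, scores: RunScore[]): void {
  for (const s of scores) {
    const entry = board.get(s.modelId) ?? { modelId: s.modelId, runs: 0, total: 0 };
    entry.runs += 1;
    entry.total += s.accuracy + s.clarity + s.completeness;
    board.set(s.modelId, entry);
  }
}

// Rank by mean combined score per run (an assumption; ranking by total would also work).
function ranked(board: Map<string, LeaderboardEntry>): Array<LeaderboardEntry & { mean: number }> {
  return Array.from(board.values())
    .map((e) => ({ ...e, mean: e.total / e.runs }))
    .sort((a, b) => b.mean - a.mean);
}
```

Ranking by mean rather than total keeps a model that has simply been run more often from floating to the top.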

It also tracks cost per response down to fractions of a cent. That is enough information to make an informed decision about which model fits your budget and your use case.
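The cost arithmetic itself is simple. The sketch below assumes each model has a price in USD per million tokens, split between prompt and completion, and that token counts are available once a response finishes. The `Pricing` and `Usage` shapes and both function names are hypothetical, not taken from the tool or from any provider's API.

```typescript
// Hypothetical pricing shape: USD per one million tokens.
interface Pricing {
  promptPerMillion: number;
  completionPerMillion: number;
}

// Hypothetical usage shape: token counts for one response.
interface Usage {
  promptTokens: number;
  completionTokens: number;
}

// Cost of a single response in USD.
function responseCostUsd(usage: Usage, pricing: Pricing): number {
  const promptCost = usage.promptTokens * pricing.promptPerMillion;
  const completionCost = usage.completionTokens * pricing.completionPerMillion;
  return (promptCost + completionCost) / 1e6;
}

// Six decimal places keeps sub-cent differences visible.
function formatCost(usd: number): string {
  return `$${usd.toFixed(6)}`;
}
```

For example, 1,200 prompt tokens and 350 completion tokens at $3 and $15 per million tokens come to (1200 × 3 + 350 × 15) / 1,000,000 = $0.00885.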

Under the hood

Next.js 16 with App Router, Tailwind, and Framer Motion for the UI. OpenRouter as the LLM gateway, giving one API key access to every provider. Streaming works through Server-Sent Events: the server opens a parallel fetch to each model, tags every chunk with its model ID, and multiplexes them down a single stream. Deployed on Cloudflare Workers, with the bundle under 2 MiB. No database, no auth, no sign-up. Paste a key and go.
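A rough sketch of the fan-in step, under stated assumptions: each model's response is already available as an `AsyncIterable<string>` of text chunks, model IDs are unique, and the names `multiplex`, `toSseEvent`, `ModelStream`, and `TaggedChunk` are made up for this example. It shows one way to interleave chunks as they arrive, not the project's actual implementation.

```typescript
interface ModelStream {
  modelId: string;
  stream: AsyncIterable<string>;
}

interface TaggedChunk {
  modelId: string;
  text: string;
}

// Merge several per-model streams into one, yielding each chunk as soon as
// any source produces it. Each source has at most one outstanding next()
// call, so chunks from the same model stay in order and none are dropped.
async function* multiplex(sources: ModelStream[]): AsyncGenerator<TaggedChunk> {
  type Pulled = { modelId: string; result: IteratorResult<string> };
  const iterators = new Map<string, AsyncIterator<string>>();
  const pending = new Map<string, Promise<Pulled>>();

  const pull = (modelId: string, it: AsyncIterator<string>): Promise<Pulled> =>
    it.next().then((result) => ({ modelId, result }));

  for (const { modelId, stream } of sources) {
    const it = stream[Symbol.asyncIterator]();
    iterators.set(modelId, it);
    pending.set(modelId, pull(modelId, it));
  }

  while (pending.size > 0) {
    // Wait for whichever model delivers next.
    const { modelId, result } = await Promise.race(Array.from(pending.values()));
    if (result.done) {
      pending.delete(modelId); // this model has finished
    } else {
      pending.set(modelId, pull(modelId, iterators.get(modelId)!));
      yield { modelId, text: result.value };
    }
  }
}

// Serialize one tagged chunk as a Server-Sent Events message.
function toSseEvent(chunk: TaggedChunk): string {
  return `data: ${JSON.stringify(chunk)}\n\n`;
}
```

In this sketch an error from any one source ends the whole merged stream. A real version would probably catch per-model failures and emit them as tagged error events so the other models keep streaming.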

The SSE multiplexing was the real puzzle. One client request fans out to N models on the server, and all those chunks need to interleave back through a single stream without dropping tokens or mixing up IDs. Getting that reliable took most of the build time.

The judge shuffles responses before scoring so position in the prompt doesn’t bias results, then maps structured JSON scores back to the original model IDs.
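The shuffle-and-map-back step for the judge can be sketched like this. The anonymous `Response N` labels, the `JudgeScore` shape, and the function names are assumptions for illustration; the post does not say how the real judge prompt labels responses.

```typescript
interface ModelResponse {
  modelId: string;
  text: string;
}

// Hypothetical shape of one entry in the judge's structured JSON output.
interface JudgeScore {
  label: string;
  accuracy: number;
  clarity: number;
  completeness: number;
}

// Fisher-Yates shuffle on a copy; `random` is injectable so tests can be deterministic.
function shuffle<T>(items: T[], random: () => number = Math.random): T[] {
  const copy = items.slice();
  for (let i = copy.length - 1; i > 0; i--) {
    const j = Math.floor(random() * (i + 1));
    [copy[i], copy[j]] = [copy[j], copy[i]];
  }
  return copy;
}

// Shuffle, then replace model IDs with neutral labels the judge will see.
function blindResponses(responses: ModelResponse[], random: () => number = Math.random) {
  const labelToModel = new Map<string, string>();
  const blinded = shuffle(responses, random).map((r, i) => {
    const label = `Response ${i + 1}`;
    labelToModel.set(label, r.modelId);
    return { label, text: r.text };
  });
  return { blinded, labelToModel };
}

// Map the judge's scores from labels back to the original model IDs.
function unblindScores(scores: JudgeScore[], labelToModel: Map<string, string>) {
  return scores.map(({ label, ...criteria }) => {
    const modelId = labelToModel.get(label);
    if (modelId === undefined) {
      throw new Error(`Judge returned unknown label: ${label}`);
    }
    return { modelId, ...criteria };
  });
}
```

Keeping the label-to-model map on the server side means the judge never sees a model name, and an unexpected label in its output fails loudly instead of silently crediting the wrong model.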